Abstract: The perfect secrecy is an inevitable condition in any cryptosystems. With the new node now considered, the procedure is repeated until only one node remains in the Huffman tree. Hardware based Entropy Calculation in Crypto Applications. The previous 2 nodes merged into one node (thus not considering them anymore). A new node whose children are the 2 nodes with the smallest probability is created, such that the new node's probability is equal to the sum of the children's probability. The process essentially begins with the leaf nodes containing the probabilities of the symbol they represent. A Huffman tree that omits unused symbols produces the most optimal code lengths. A finished tree has up to n leaf nodes and n-1 internal nodes. As a standard convention, bit '0' represents following the left child, and the bit '1' represents following the right child. Internal nodes contain symbol weight, links to two child nodes, and the optional link to a parent node. Entropy calculator uses the Gibbs free energy formula, the entropy change for chemical reactions formula, and estimates the isothermal entropy change of. Initially, all nodes are leaf nodes, which contain the symbol itself, the weight (frequency of appearance) of the symbol, and optionally, a link to a parent node, making it easy to read the code (in reverse) starting from a leaf node. The technique works by creating a binary tree of nodes. Huffman coding is such a widespread method for creating prefix codes that the term "Huffman code" is widely used as a synonym for "prefix code" even when Huffman's algorithm does not produce such a code. Huffman was able to design the most efficient compression method of this type no other mapping of individual source symbols to unique strings of bits will produce a smaller average output size when the actual symbol frequencies agree with those used to create the code. 
Huffman coding uses a specific method for choosing the representation for each symbol, resulting in a prefix code (sometimes called "prefix-free codes," that is, the bit string representing some particular symbol is never a prefix of the bit string representing any other symbol) that expresses the most common source symbols using shorter strings of bits than are used for less common source symbols. student at MIT and published in the 1952 paper "A Method for the Construction of Minimum-Redundancy Codes."

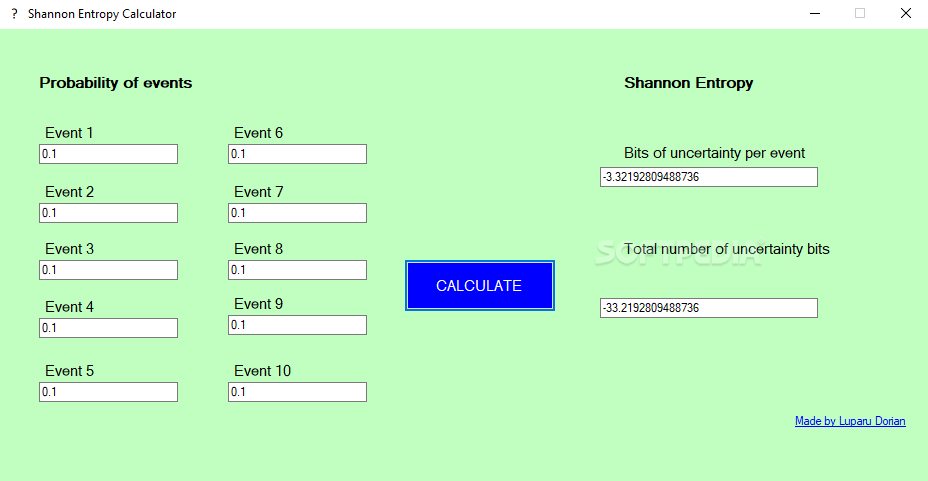

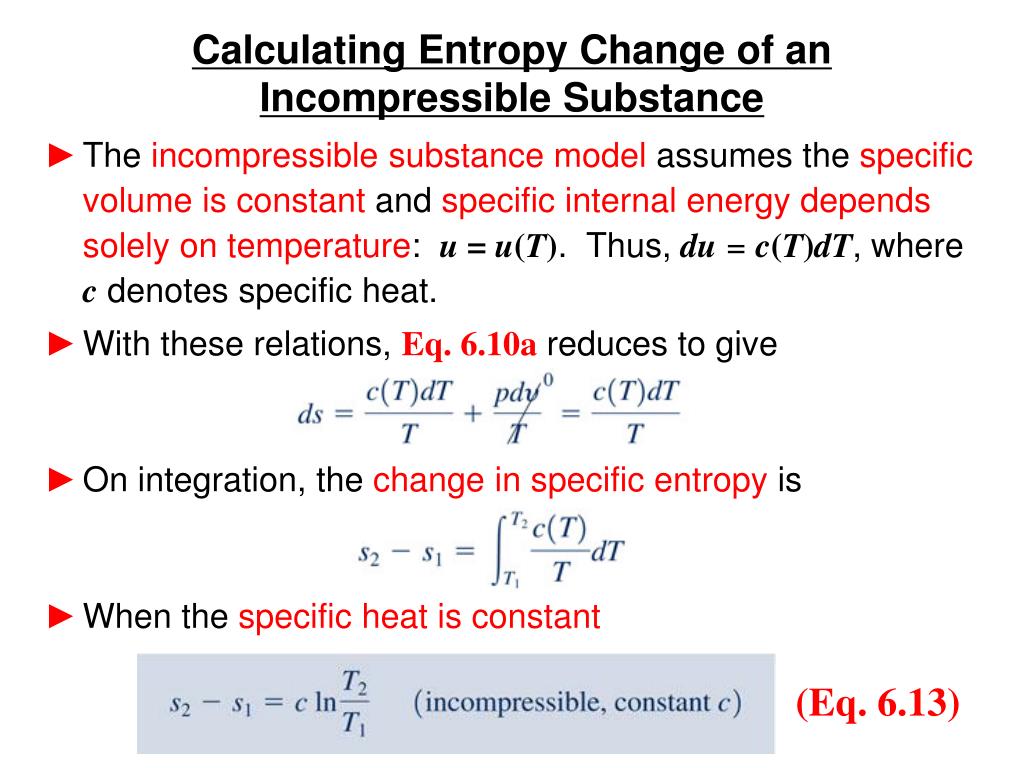

Huffman developed it while he was a Ph.D. The term refers to using a variable-length code table for encoding a source symbol (such as a character in a file) where the variable-length code table has been derived in a particular way based on the estimated probability of occurrence for each possible value of the source symbol. But will serve as a decent guideline for guessing what the entropy should be.In computer science and information theory, Huffman coding is an entropy encoding algorithm used for lossless data compression. This type of rational does not always work (think of a scenario with hundreds of outcomes all dominated by one occurring \(99.999\%\) of the time). We can redefine entropy as the expected number of bits one needs to communicate any result from a distribution. Entropy will grow when a process is irreversible. The two formulas highly resemble one another, the primary difference between the two is \(x\) vs \(\log_2p(x)\). Entropy is a probability measure of a macroscopic systems molecular disorder. If instead I used a coin for which both sides were tails you could predict the outcome correctly \(100\%\) of the time.Įntropy helps us quantify how uncertain we are of an outcome. For example if I asked you to predict the outcome of a regular fair coin, you have a \(50\%\) chance of being correct. Once the probability of each character is known, the algorithm applies Shannons entropy formula, which looks like this: H -p (x.
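The merge procedure described above can be sketched in Python. This is a minimal illustration, not the author's implementation: it assumes symbol weights arrive as a dict of frequencies, uses a binary heap (`heapq`) to find the two lowest-weight nodes at each step, and breaks ties between equal weights with an insertion counter. The names `Node`, `build_huffman_tree`, `assign_codes`, and `decode` are hypothetical.

```python
import heapq
import itertools
from collections import Counter


class Node:
    """Leaf nodes hold a symbol; internal nodes hold links to two children."""

    def __init__(self, weight, symbol=None, left=None, right=None):
        self.weight = weight
        self.symbol = symbol
        self.left = left
        self.right = right

    def is_leaf(self):
        return self.left is None and self.right is None


def build_huffman_tree(weights):
    """Merge the two lowest-weight nodes until a single root remains."""
    tie = itertools.count()  # tie-breaker so heapq never has to compare Node objects
    heap = [(w, next(tie), Node(w, symbol=s)) for s, w in weights.items() if w > 0]
    if not heap:
        return None
    heapq.heapify(heap)
    while len(heap) > 1:
        w1, _, left = heapq.heappop(heap)   # smallest weight
        w2, _, right = heapq.heappop(heap)  # second-smallest weight
        parent = Node(w1 + w2, left=left, right=right)  # weight = sum of children
        heapq.heappush(heap, (parent.weight, next(tie), parent))
    return heap[0][2]


def assign_codes(node, prefix="", codes=None):
    """Read codes off the tree: '0' follows the left child, '1' the right."""
    if codes is None:
        codes = {}
    if node is None:
        return codes
    if node.is_leaf():
        codes[node.symbol] = prefix or "0"  # a one-symbol alphabet still needs one bit
        return codes
    assign_codes(node.left, prefix + "0", codes)
    assign_codes(node.right, prefix + "1", codes)
    return codes


def decode(root, bits):
    """Walk down from the root, emitting a symbol each time a leaf is reached."""
    if root.is_leaf():
        return [root.symbol] * len(bits)
    out, node = [], root
    for bit in bits:
        node = node.left if bit == "0" else node.right
        if node.is_leaf():
            out.append(node.symbol)
            node = root
    return out


text = "abracadabra"
root = build_huffman_tree(Counter(text))
codes = assign_codes(root)
encoded = "".join(codes[ch] for ch in text)
print(codes)
print(len(encoded), "bits vs", 8 * len(text), "bits at 8 bits/char")
```

With the tie-breaking used here, "abracadabra" gets the codes a=0, c=100, d=101, b=110, r=111 and encodes to 23 bits instead of 88, and decoding the bit string recovers the original text. A different tie-breaking rule can yield different codes with the same total length.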

Quantifying Randomness: Entropy, Information Gain and Decision Trees

Entropy is a measure of expected "surprise": essentially, how uncertain we are about the value drawn from some distribution. The higher the entropy, the more unpredictable the outcome. To calculate the entropy of a given text, the algorithm first counts the frequency of each symbol in the text. It then uses these frequencies to calculate the probability of each symbol appearing in the text, and finally applies Shannon's entropy formula to those probabilities.
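The three steps above (count symbol frequencies, convert them to probabilities, apply Shannon's formula) can be sketched in Python as follows. The function names `shannon_entropy` and `text_entropy` are hypothetical, and the sketch assumes each character is an independent symbol, so it ignores correlations between neighboring characters.

```python
import math
from collections import Counter


def shannon_entropy(probabilities):
    """H = -sum p * log2(p), written as sum p * log2(1/p); zero-probability terms are skipped."""
    return sum(p * math.log2(1 / p) for p in probabilities if p > 0)


def text_entropy(text):
    """Entropy in bits per symbol, estimated from the symbol frequencies in `text`."""
    if not text:
        return 0.0
    counts = Counter(text)  # step 1: count each symbol's frequency
    total = len(text)
    probabilities = [n / total for n in counts.values()]  # step 2: frequencies -> probabilities
    return shannon_entropy(probabilities)  # step 3: apply Shannon's formula


print(shannon_entropy([0.5, 0.5]))  # fair coin
print(shannon_entropy([1.0]))       # coin with tails on both sides
print(text_entropy("abracadabra"))
```

The fair coin gives 1.0 bit and the two-tailed coin 0.0 bits, matching the intuition that a fully predictable outcome carries no surprise. For "abracadabra" the estimate is about 2.04 bits per character; no symbol-by-symbol prefix code built for these character frequencies can average fewer bits per character than that.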
